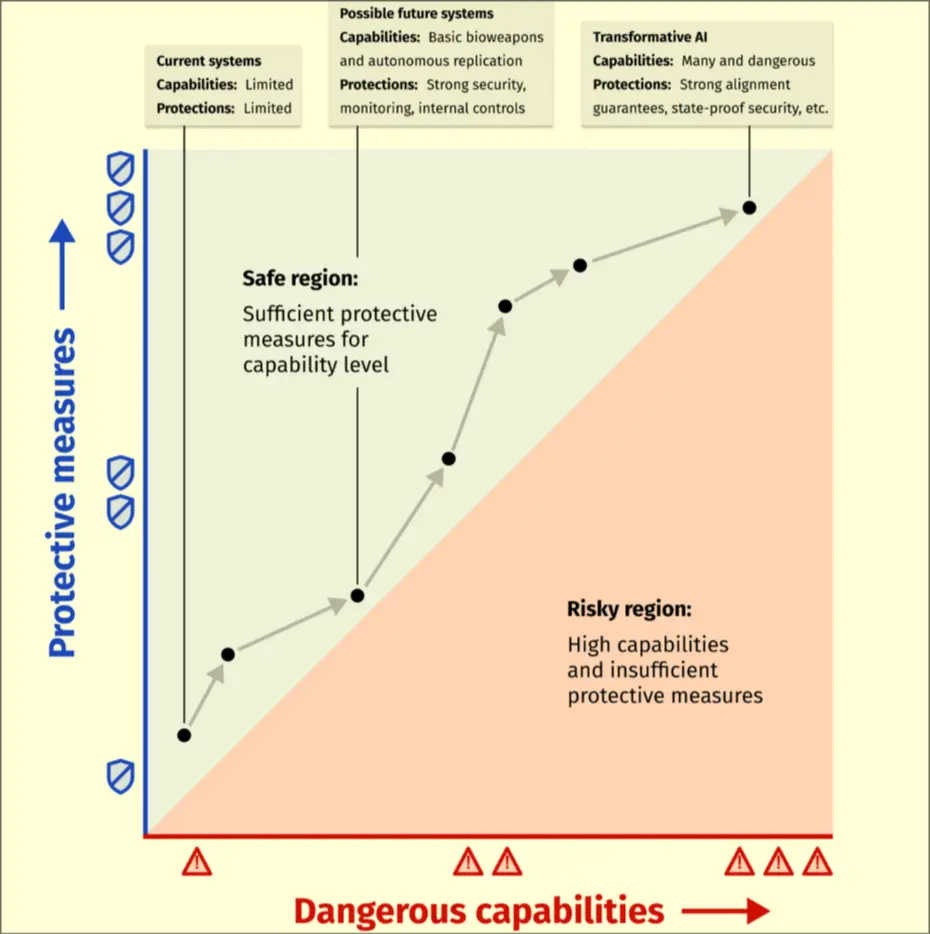

A Responsible Scaling Policy (RSP) is a policy that commits an AI developer not to scale its systems unless it is entirely sure it can make what it builds safe.

RSP Structure

A good RSP should cover:

- Limits: which capabilities would indicate that further scaling is unsafe

- Protections: which measures are necessary to contain the risks

- Evaluations: which procedures are used to catch warning signs of dangerous capabilities

- Response: the set of principles for pausing AI progress when the limits are reached

- Accountability: how the AI developer ensures that RSP commitments are executed, and how outside parties can verify this

RSP Drawbacks

- Slower development: to keep up with competitors, a developer may feel pressure to reduce the scope of its RSP

- Requires consistent evaluations, which can sound false alarms

- GA